Price and competitive monitoring is the fastest-growing segment of the web scraping market, rising at a 19.8% CAGR as retailers rely on real-time competitor data for dynamic pricing strategies. Building a pipeline that actually holds up under that pressure, though, requires more than a few scrapers and a cron job.

The real challenge is infrastructure. Specifically: staying connected to the sites you need to monitor without hitting blocks, CAPTCHAs, or rate limits that corrupt your data before it reaches your database.

Why Standard Scraping Setups Break Down at Scale

Most teams start small — a Python script, a handful of target URLs, and a shared datacenter IP. That works fine for dozens of requests a day. It stops working the moment the target site notices a pattern.

The problem is that datacenter IPs are easy to fingerprint. They come from known hosting ranges, they don’t look like organic user traffic, and large e-commerce platforms — Amazon, Walmart, Zalando — have bot detection that flags them almost immediately. As a result, your scraper returns empty pages, incorrect prices, or soft blocks that look like valid responses until you check the data.

Residential proxies fix this at the source. Each request exits through a real consumer IP address, assigned by an ISP, in a specific location. From the target site’s perspective, the traffic looks like a regular user browsing from Berlin or São Paulo.

What a Solid Price Monitoring Pipeline Actually Needs

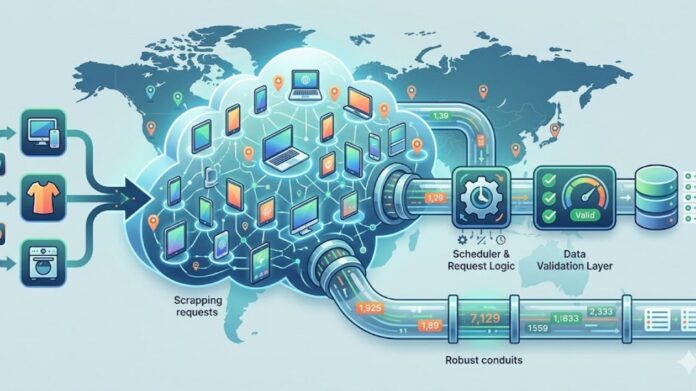

A production-grade price monitoring setup has three layers that have to work together, and each can become the weak link if it’s not properly configured.

Proxy Layer

The proxy layer is where most pipelines fail or succeed. A few things matter here:

- Pool size and diversity: A large residential pool means your requests are distributed across many unique IPs, which reduces the chance of pattern detection. A pool of 90M+ IPs across 195 countries gives you enough room to run concurrent high-volume jobs without exhausting your rotation.

- Geo-targeting precision: Prices often vary by region, so monitoring can require city- or ZIP-level targeting. Proxies need to support country, state, city, ZIP, and ASN-level targeting to return accurate localized results.

- Session control: Some workflows — like monitoring a single product page across a session — need sticky IPs. Others, like scraping paginated category listings, are better served with rotating IPs on every request. Your proxy provider needs to support both.

For teams running this in production, a proxy provider like DataImpulse covers all three requirements: a 90M+ IP residential pool, granular geo-targeting down to ZIP/ASN, and configurable rotating or sticky sessions on the same infrastructure.
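As a rough illustration of the rotating versus sticky distinction, the sketch below builds proxy URLs for both modes. Everything provider-specific here is an assumption: the gateway host, the `country-` and `session-` username suffixes, and the helper names `proxy_url` and `proxies_for` are hypothetical. Many providers encode targeting and session options in the proxy username, but the exact format differs, so check your provider's documentation before copying this.

```python
import uuid
from urllib.parse import quote

# Hypothetical gateway address; replace with the host and port your provider documents.
GATEWAY = "gateway.example-proxy.test:8000"


def proxy_url(user, password, country=None, session_id=None):
    """Build a proxy URL with options encoded in the username (assumed convention)."""
    parts = [user]
    if country:
        parts.append(f"country-{country.lower()}")
    if session_id:
        # Reusing the same session id is assumed to pin the same exit IP.
        parts.append(f"session-{session_id}")
    username = quote("-".join(parts), safe="")
    return f"http://{username}:{quote(password, safe='')}@{GATEWAY}"


def proxies_for(url):
    """Return the mapping shape that HTTP clients such as requests accept."""
    return {"http": url, "https": url}


# Rotating: no session id, so each request may exit through a different IP.
rotating = proxy_url("acct", "secret", country="DE")

# Sticky: one session id reused for every request in the same workflow.
sticky = proxy_url("acct", "secret", country="DE", session_id=uuid.uuid4().hex[:8])
```

With the `requests` library, the result would typically be passed as `requests.get(url, proxies=proxies_for(sticky), timeout=15)`.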

Scheduler and Request Logic

Even with good proxies, unthrottled request rates trigger alarms. A well-designed pipeline controls its own pace. Practical approaches include:

- Jittered delays: Randomizing time between requests (e.g., 1–4 seconds) avoids the signature of automated loops.

- Request batching by target domain: Group requests to the same domain and distribute them across a time window to stay below rate limits.

- Retry logic with exponential backoff: On a 429 or soft block, wait, rotate the proxy, and retry — don’t just fail.
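The three approaches above can be sketched in a few small functions. This is a minimal illustration, not a complete scheduler: `fetch` and `new_proxy` are hypothetical callables you would supply, the backoff uses the common "full jitter" variant, and treating an empty 200 response as a soft block is an assumption that will not fit every site.

```python
import random
import time
from collections import defaultdict
from urllib.parse import urlsplit


def jittered_delay(low=1.0, high=4.0):
    """Random pause in seconds between requests, e.g. 1 to 4 seconds."""
    return random.uniform(low, high)


def group_by_domain(urls):
    """Batch URLs by host so each domain can be paced on its own schedule."""
    batches = defaultdict(list)
    for url in urls:
        batches[urlsplit(url).netloc].append(url)
    return dict(batches)


def backoff_delay(attempt, base=2.0, cap=60.0):
    """Exponential backoff with full jitter: uniform in [0, min(cap, base * 2**attempt)]."""
    return random.uniform(0, min(cap, base * 2 ** attempt))


def looks_blocked(status, body):
    """Assumed heuristic: HTTP 429, or a 200 with an empty body, counts as a block."""
    return status == 429 or (status == 200 and not body.strip())


def fetch_with_retry(fetch, url, new_proxy, max_attempts=4, sleep=time.sleep):
    """Retry blocked requests, taking a fresh proxy on every attempt.

    fetch(url, proxy) must return (status, body); new_proxy() returns a proxy.
    Both are hypothetical hooks supplied by the caller.
    """
    for attempt in range(max_attempts):
        status, body = fetch(url, new_proxy())
        if not looks_blocked(status, body):
            return status, body
        if attempt < max_attempts - 1:
            sleep(backoff_delay(attempt))
    raise RuntimeError(f"gave up on {url} after {max_attempts} attempts")
```

Passing `sleep` as a parameter keeps the retry loop testable without real waiting, and returning the status alongside the body leaves other errors (404, 500) for the caller to handle.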

Data Validation Layer

Raw price data coming from scrapers is noisy. Prices get stripped of currency symbols, rendered client-side via JavaScript, or returned in locale-specific formats. A validation layer should normalize formats, flag anomalies (a product suddenly 90% cheaper is probably a parse error), and log parse failures separately from block events.
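A minimal sketch of the normalization and anomaly checks might look like the following. The function names `parse_price` and `is_anomalous` are illustrative, and the separator rule is a heuristic assumption: a final `.` or `,` followed by one or two digits is read as the decimal separator, which would misread currencies quoted with three decimal places.

```python
import re
from decimal import Decimal, InvalidOperation


def parse_price(raw):
    """Normalize a scraped price string to a Decimal, or None if unparseable."""
    cleaned = re.sub(r"[^\d.,]", "", raw or "")
    if not re.search(r"\d", cleaned):
        return None
    # Heuristic: a trailing separator plus 1-2 digits marks the decimal part.
    match = re.search(r"[.,](\d{1,2})$", cleaned)
    if match:
        whole = re.sub(r"[.,]", "", cleaned[:match.start()])
        number = f"{whole or '0'}.{match.group(1)}"
    else:
        # No decimal part found: remaining separators are thousands grouping.
        number = re.sub(r"[.,]", "", cleaned)
    try:
        return Decimal(number)
    except InvalidOperation:
        return None


def is_anomalous(new, previous, max_drop=Decimal("0.9")):
    """Flag a price that fell by max_drop (90% by default) or more."""
    if previous is None or previous <= 0:
        return False
    return (previous - new) / previous >= max_drop


print(parse_price("€1.299,00"))   # German-style formatting
print(parse_price("$1,299.00"))   # US-style formatting
print(is_anomalous(Decimal("9.99"), Decimal("100")))
```

Returning `None` rather than raising lets the caller count unparseable values as parse failures instead of crashing the run.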

Keep the Pipeline Running: Cost and Maintenance

One operational problem teams run into with monthly proxy plans is unused bandwidth. If your scraping is seasonal or campaign-driven, you’re paying for capacity you don’t use. Pay-as-you-go pricing — where you buy bandwidth by the GB and it doesn’t expire — aligns much better with irregular scraping schedules.

Beyond billing structure, maintenance overhead is worth planning for. E-commerce sites in particular update layouts, introduce new bot detection layers, and increasingly serve prices via client-side JavaScript rather than static HTML. A scraper that worked last month may therefore silently return empty fields today instead of throwing an obvious error.

Building in a monitoring step that flags parse failures separately from proxy blocks makes it much faster to diagnose which layer of the pipeline actually broke.
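One way to sketch that monitoring step is to label every response with exactly one outcome and tally the labels per run. The `Outcome` names, the status codes treated as blocks, and the `BLOCK_MARKERS` strings are illustrative assumptions; real block pages vary by site, and a marker list like this needs tuning to avoid false positives.

```python
from collections import Counter
from enum import Enum


class Outcome(Enum):
    OK = "ok"
    BLOCKED = "blocked"
    PARSE_FAILURE = "parse_failure"


# Illustrative strings only; adjust per target site.
BLOCK_MARKERS = ("captcha", "access denied", "unusual traffic")


def classify(status, body, price):
    """Label one response: proxy-layer block, parser failure, or success."""
    lowered = body.lower()
    if status in (403, 429) or any(marker in lowered for marker in BLOCK_MARKERS):
        return Outcome.BLOCKED
    if price is None:
        # The page loaded normally but no price could be extracted.
        return Outcome.PARSE_FAILURE
    return Outcome.OK


# Example run: (status, body, parsed price) tuples from three requests.
results = [
    (429, "", None),
    (200, "<span class='price'></span>", None),
    (200, "<span class='price'>19.99</span>", 19.99),
]
tally = Counter(classify(*row) for row in results)
```

A spike in `BLOCKED` points at the proxy or request-pacing layer, while a spike in `PARSE_FAILURE` with normal block rates suggests the target changed its markup.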

From Pipeline to Decision-Making

A reliable price monitoring pipeline is the foundation for real competitive intelligence. Once the data is clean and consistent, teams can move to delta tracking (alerting when a competitor changes price), trend analysis, and integrating pricing signals into their own repricing logic or ML models. The proxy layer is what makes that possible — get it wrong, and everything downstream is quietly corrupted.
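Delta tracking, for instance, can start as a comparison of two validated snapshots. The sketch below assumes snapshots are dictionaries mapping a product identifier to a `Decimal` price; the function name `price_deltas` and the 1% default threshold are illustrative choices, not a recommendation.

```python
from decimal import Decimal


def price_deltas(previous, current, min_change=Decimal("0.01")):
    """Return (sku, old, new, fractional_change) for prices that moved enough.

    Products missing from the previous snapshot are skipped, since there is
    no baseline to compare against.
    """
    changes = []
    for sku, new in current.items():
        old = previous.get(sku)
        if old is None or old == 0:
            continue
        change = (new - old) / old
        if abs(change) >= min_change:
            changes.append((sku, old, new, change))
    return changes


yesterday = {"A": Decimal("100"), "B": Decimal("50")}
today = {"A": Decimal("90"), "B": Decimal("50"), "C": Decimal("10")}
alerts = price_deltas(yesterday, today)
```

Here only product `A` produces an alert: `B` is unchanged and `C` has no earlier price to compare with.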