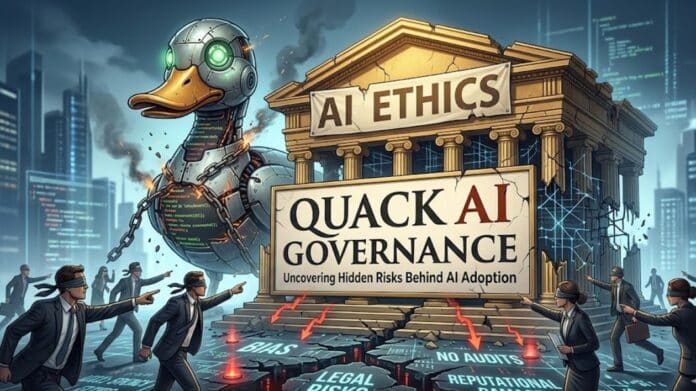

Artificial intelligence is no longer a futuristic idea. It has become part of everyday business, from recruitment and customer service to healthcare and finance. However, the growing use of AI in organisations has brought a troubling phenomenon with it: Quack AI Governance. Understanding it matters today, especially for businesses.

Much like quack medicine, a remedy sold without scientific evidence that it works, Quack AI Governance creates the illusion of control. Companies believe they are managing AI risks, but in reality they are overlooking key vulnerabilities without realising it. This gap can lead to serious ethical, legal, and operational consequences. Let’s look more closely at the issues and risks involved.

What is Quack AI Governance?

Quack AI Governance is a set of governance structures that are more performative than practical. It occurs when organisations issue policies, guidelines, or ethical principles but rarely build them into real workflows or decision-making.

These frameworks tend to look impressive in reports or marketing materials, but they have no effect on how AI systems are actually built, tested, or deployed. The result is governance that exists on paper but not in practice.

The Main Features of Quack AI Governance

The gap between intention and execution is one of the clearest signs of Quack AI Governance. Many organisations sincerely try to use AI responsibly, yet fall short. Its main characteristics are:

- Lack of Enforcement: Companies may draft comprehensive AI ethics policies yet never put monitoring mechanisms, audits, or penalties in place. Governance becomes a ritual rather than a working system.

- Buzzword-Driven Communication: Terms such as “ethical AI” or “responsible AI” are used without any definition or measurable criteria. They end up in branding rather than in operational reality.

- Poor Transparency: AI systems often function as black boxes. Companies make little effort to document or explain how these systems reach decisions and what data they rely on. This creates ambiguity and erodes trust.

- Lack of Ownership: When no person or group is in charge of AI, problems are bound to slip through the cracks. Accountability becomes unclear, and risks go unaddressed.

- Unchecked Bias and Risk: Without adequate testing and validation, AI systems may unintentionally reinforce discrimination or produce unreliable results.

Why Quack AI Governance is Dangerous

Superficial governance harms a business in several ways. Users lose trust in AI systems that behave unpredictably, and this is especially visible in fields like finance and healthcare, where decisions carry high stakes.

There are also serious legal implications. As AI regulation becomes more stringent, organisations with weak governance risk falling out of compliance. This may result in fines, lawsuits, and lasting reputational damage. The ethical stakes are even higher. Poor governance can produce biased hiring tools, discriminatory credit-scoring systems, or invasive surveillance technologies. Such failures affect not only businesses but also people’s lives.

Why Organisations Fall Into This Trap

Preventing Quack AI Governance starts with understanding its underlying causes. In most cases the problem is not deliberate negligence but structural and cultural factors. One key factor is a shortage of expertise. AI governance requires a blend of technical, legal, and ethical knowledge that many organisations do not yet have, so governance is often left to teams without the necessary background.

Another major factor is pressure to innovate. Businesses are eager to deploy AI solutions and gain a competitive edge, which can push governance considerations to the background.

Finally, leadership and technical teams are often out of sync. Executives tend to focus on public perception and branding, while developers receive no clear guidance on how to put governance principles into practice. This combination is a recipe for governance failure.

How to Avoid Quack AI Governance

Eliminating superficial governance requires a shift in mindset. Organisations should treat governance as an integral part of the AI lifecycle rather than an afterthought. Here are some ways to avoid Quack AI Governance:

- Building Practical Frameworks: Governance policies should include concrete steps, measurable targets, and a clear way to assess success.

- Establishing Ownership: Companies should assign specific teams or roles responsible for AI oversight, with clear accountability at every level.

- Ongoing Monitoring: Developers should regularly check AI systems for performance, bias, and drift, not just at launch.

- Enhancing Transparency: Organisations should document how their AI systems operate and explain them where feasible.

- Performing Risk Assessments: Developers and quality assurance teams should evaluate AI systems before deployment, with scrutiny proportional to each system’s level of risk.

- Investing in Education: Staff at every level should understand how AI works and the ethical and governance concerns it raises.
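To make the ongoing-monitoring step concrete, here is a minimal sketch of one common way to quantify drift: the population stability index (PSI), which compares how a model input or score was distributed at launch with how it is distributed now. This is an illustration under stated assumptions, not a prescribed method. The function names, the equal-width binning, and the 0.1 / 0.25 thresholds (widely used rules of thumb, not a standard) are choices made for this example, and the rule that every review must name an owner is a hypothetical way of tying monitoring back to accountability. The model and team names in the usage block are also hypothetical.

```python
from __future__ import annotations

import math
from typing import Sequence

# Assumed thresholds: common rules of thumb for PSI, not a regulatory standard.
PSI_WARN = 0.1
PSI_ALERT = 0.25


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compare two non-empty samples of one feature or model score.

    Bins are equal-width over the baseline range; higher values mean more drift.
    """
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the baseline range land in the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # eps keeps the logarithm defined when a bin is empty.
        return [max(c / len(values), eps) for c in counts]

    base, cur = shares(baseline), shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


def periodic_review(model: str, owner: str, baseline, current) -> dict:
    """Hypothetical review record: no named owner, no review."""
    if not owner.strip():
        raise ValueError(f"{model}: no accountable owner assigned")
    psi = population_stability_index(baseline, current)
    if psi >= PSI_ALERT:
        status = "alert"
    elif psi >= PSI_WARN:
        status = "warn"
    else:
        status = "ok"
    return {"model": model, "owner": owner, "psi": round(psi, 4), "status": status}


if __name__ == "__main__":
    launch_scores = [i / 100 for i in range(100)]
    todays_scores = [0.9] * 100  # every score has collapsed into one region
    print(periodic_review("cv-screener", "risk-team", launch_scores, launch_scores))
    print(periodic_review("cv-screener", "risk-team", launch_scores, todays_scores))
```

Running a check like this on a schedule, rather than once before launch, is what turns “ongoing monitoring” from a policy line into a working control; the thresholds themselves should be set by whoever owns the system.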

Real-World Examples of Quack AI Governance

Quack AI Governance can be subtle but impactful. Companies may believe they are doing the right thing while their actions suggest otherwise. Here are some common patterns:

- Publishing an “AI Ethics Charter” without enforcing it internally

- Claiming that AI models are fair or unbiased without conducting rigorous testing

- Deploying AI tools in sensitive areas like recruitment without testing outcomes

- Treating governance as a one-time compliance task rather than an ongoing process

These patterns point to the same underlying problem: governance is treated as a checkbox rather than an ongoing responsibility.
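Testing outcomes before calling a recruitment model fair can start with something as simple as comparing selection rates across applicant groups. The sketch below applies the “four-fifths rule” from the US Uniform Guidelines on Employee Selection Procedures, under which a group whose selection rate is below 80% of the highest group’s rate is commonly treated as showing possible adverse impact. It is a first screen, not proof of fairness or unfairness, and the class names, function names, and group counts are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class GroupOutcome:
    group: str
    applicants: int
    selected: int

    @property
    def rate(self) -> float:
        # Selection rate; a group with no applicants is treated as 0.0.
        return self.selected / self.applicants if self.applicants else 0.0


def impact_ratios(outcomes: list[GroupOutcome]) -> dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    best = max(o.rate for o in outcomes)
    if best == 0:
        raise ValueError("no group was selected; ratios are undefined")
    return {o.group: o.rate / best for o in outcomes}


def flag_adverse_impact(
    outcomes: list[GroupOutcome], threshold: float = 0.8
) -> list[str]:
    """Groups whose ratio falls below the four-fifths threshold."""
    return [g for g, r in impact_ratios(outcomes).items() if r < threshold]


if __name__ == "__main__":
    # Hypothetical screening results for two applicant groups.
    results = [
        GroupOutcome("group_a", applicants=200, selected=60),  # rate 0.30
        GroupOutcome("group_b", applicants=150, selected=30),  # rate 0.20
    ]
    print(impact_ratios(results))
    print(flag_adverse_impact(results))  # group_b: 0.20 / 0.30 is about 0.67, below 0.8
```

A flagged group is a prompt for investigation rather than a verdict: small samples can make these ratios unstable, and a passing ratio does not rule out other forms of bias.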

FAQs

1. What is Quack AI Governance?

It is a superficial form of AI governance that exists on paper but is not practised, enforced, or effective.

2. Why is Quack AI Governance a problem?

It creates an illusion of safety while leaving serious ethical, legal, and operational risks unresolved.

3. How can organisations avoid Quack AI Governance?

Organisations can avoid it by adopting practical, enforceable policies, assigning clear ownership, and continuously monitoring their AI systems.